MiniGPT-4 and MiniGPT-v2

King Abdullah University of Science and Technology

💡 Get help - Q&A or Discord 💬

News

[Oct.13 2023] Breaking! We release the first major update with our MiniGPT-v2

[Aug.28 2023] We now provide a Llama 2 version of MiniGPT-4

Online Demo

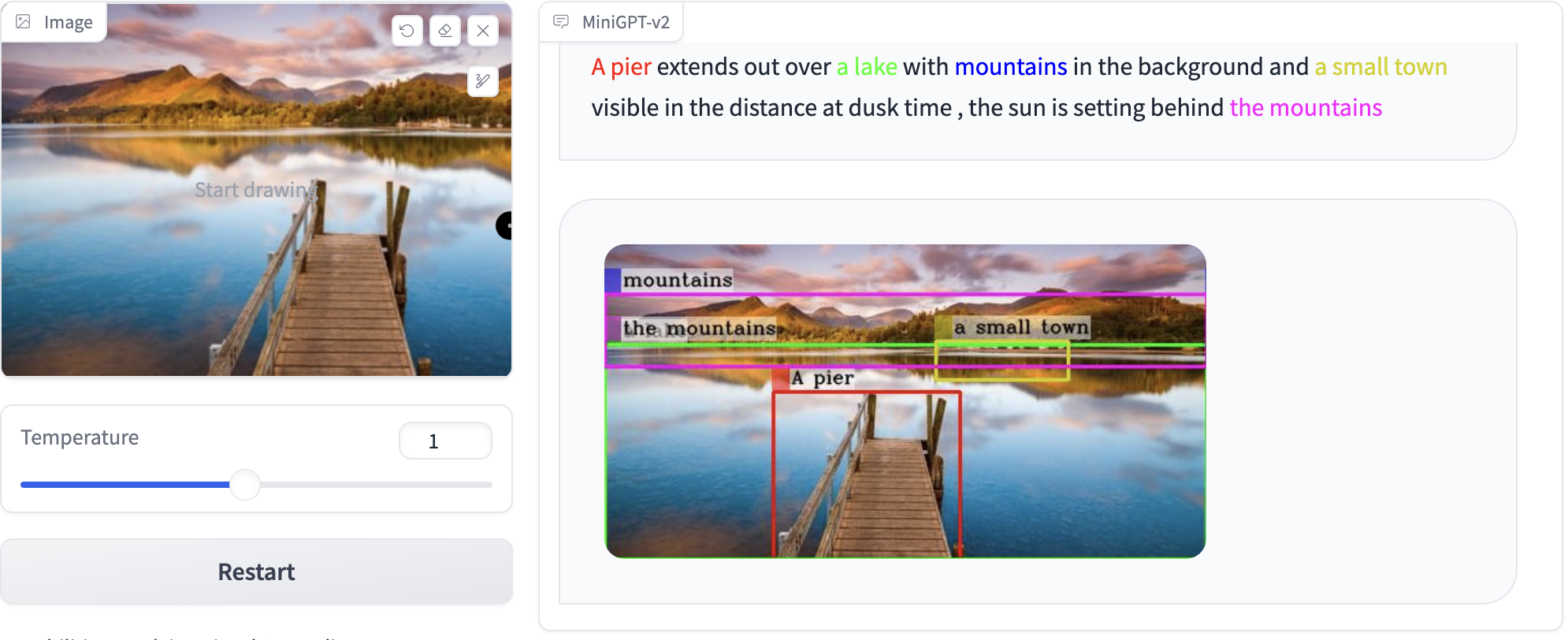

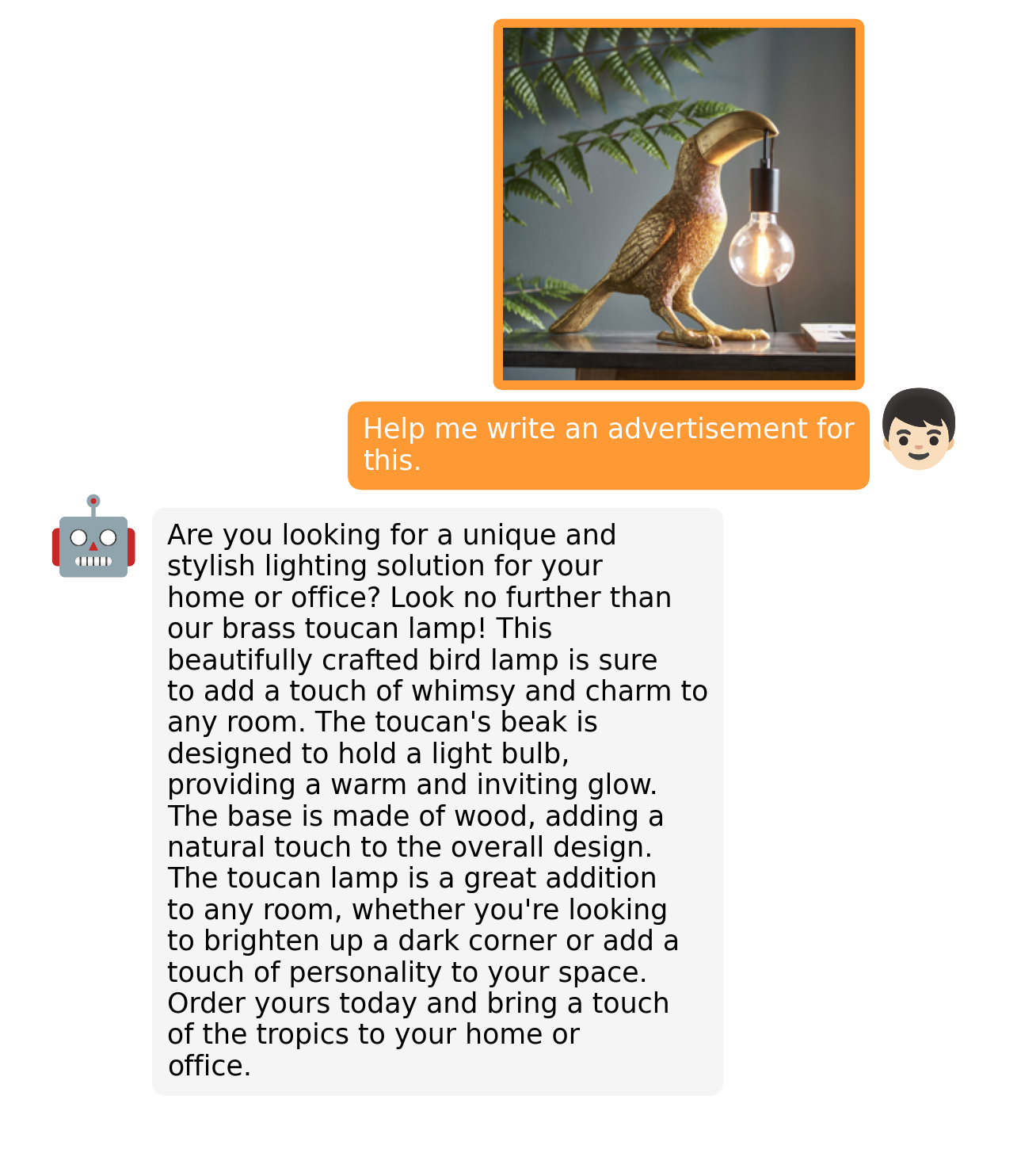

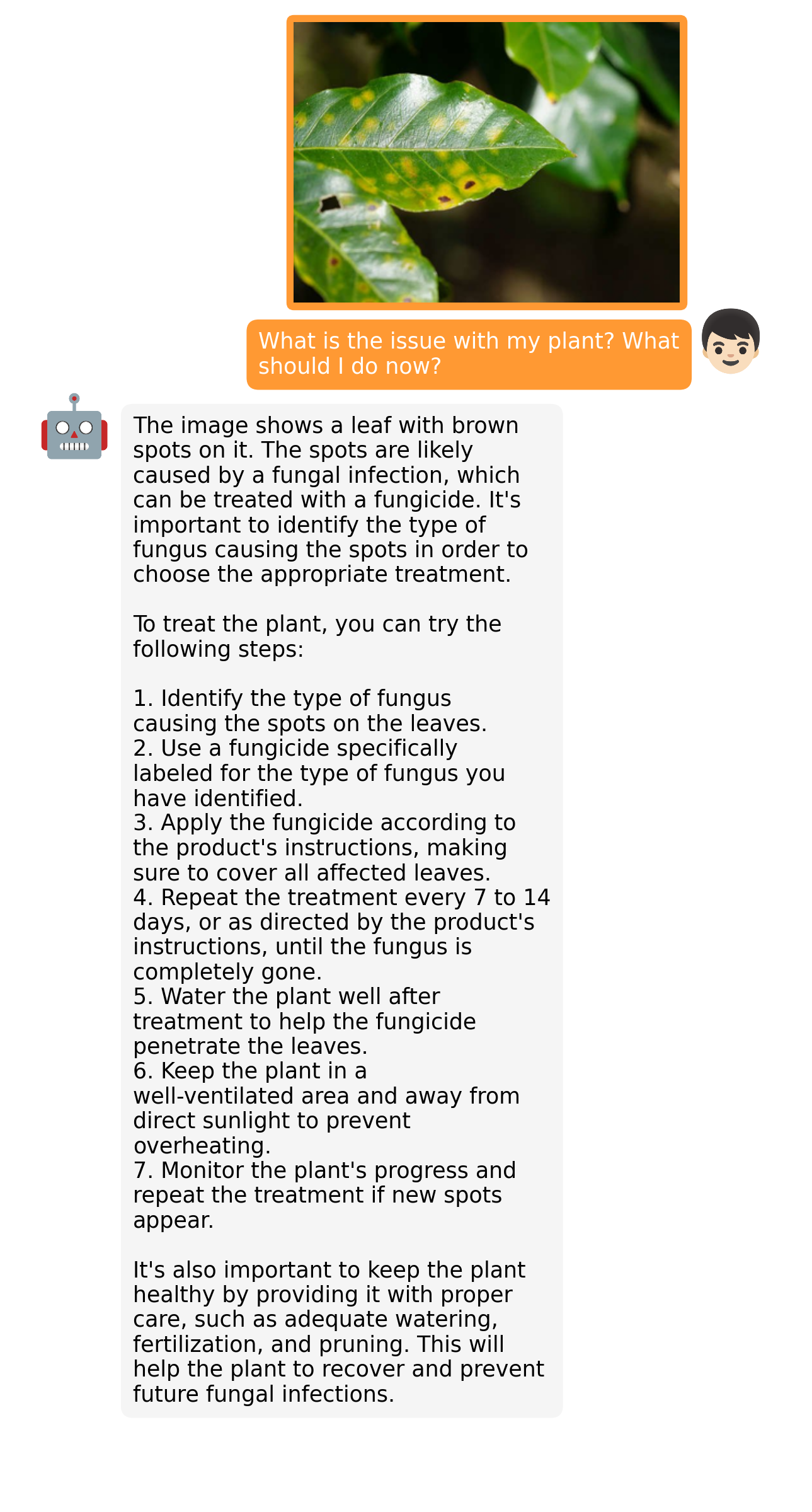

Click the image to chat with MiniGPT-v2 about your images

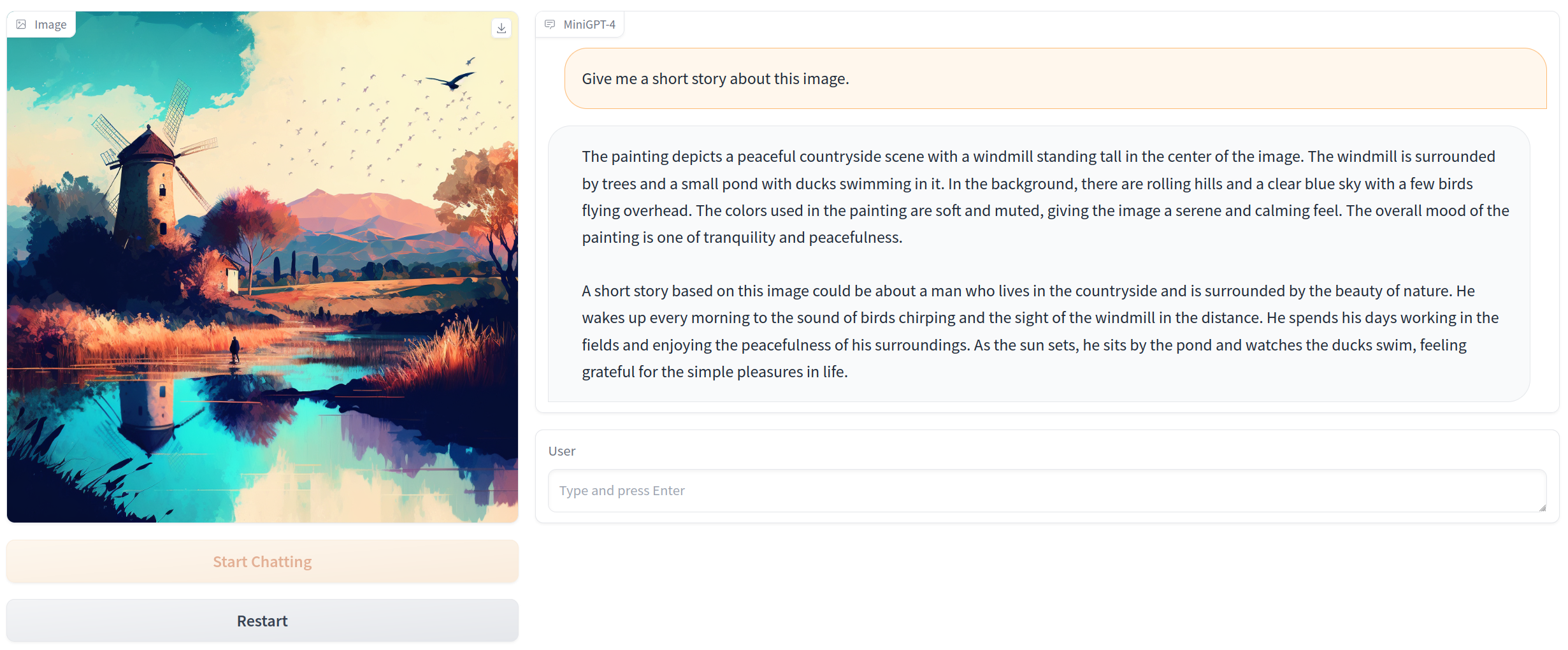

Click the image to chat with MiniGPT-4 about your images

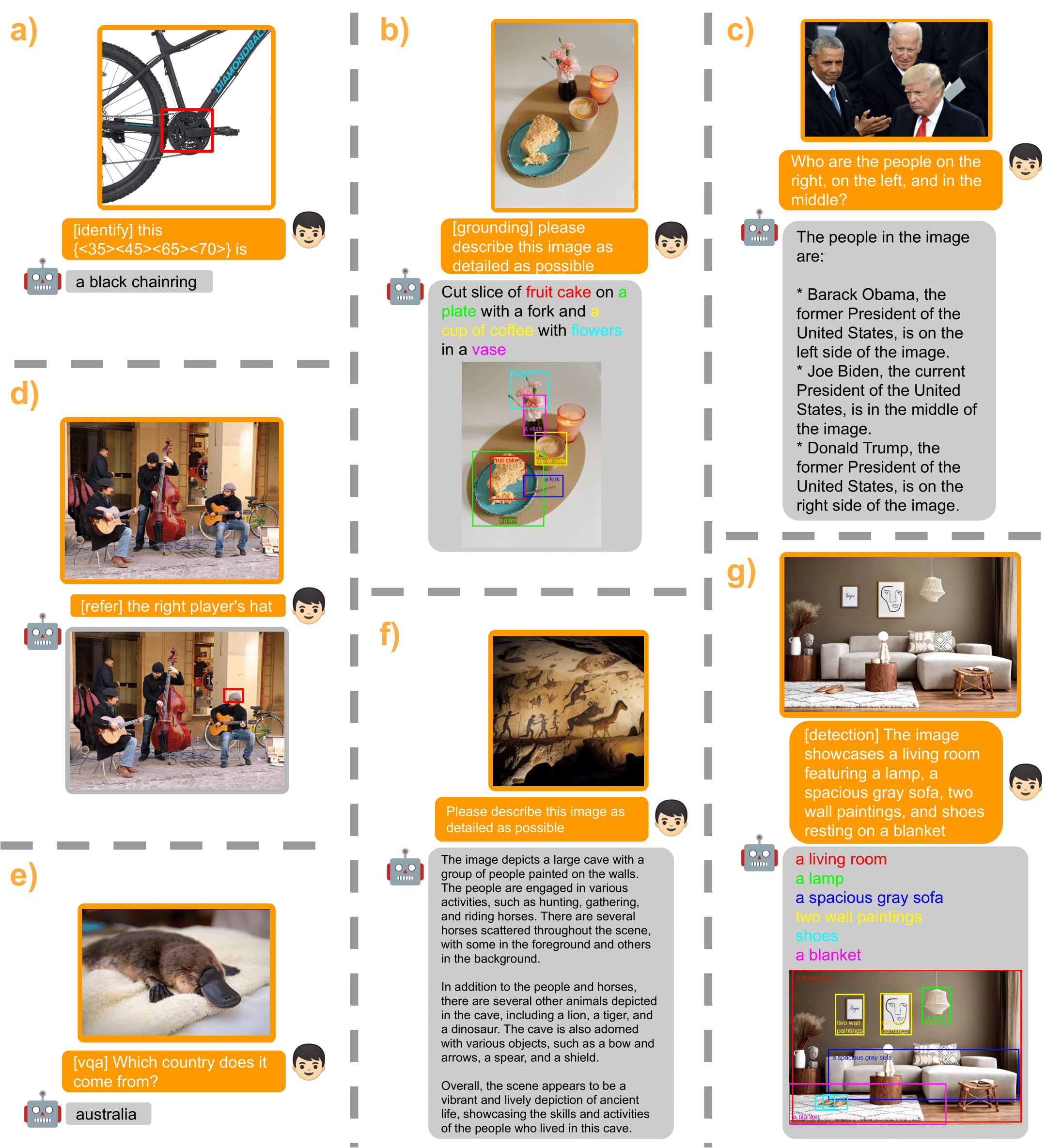

MiniGPT-v2 Examples

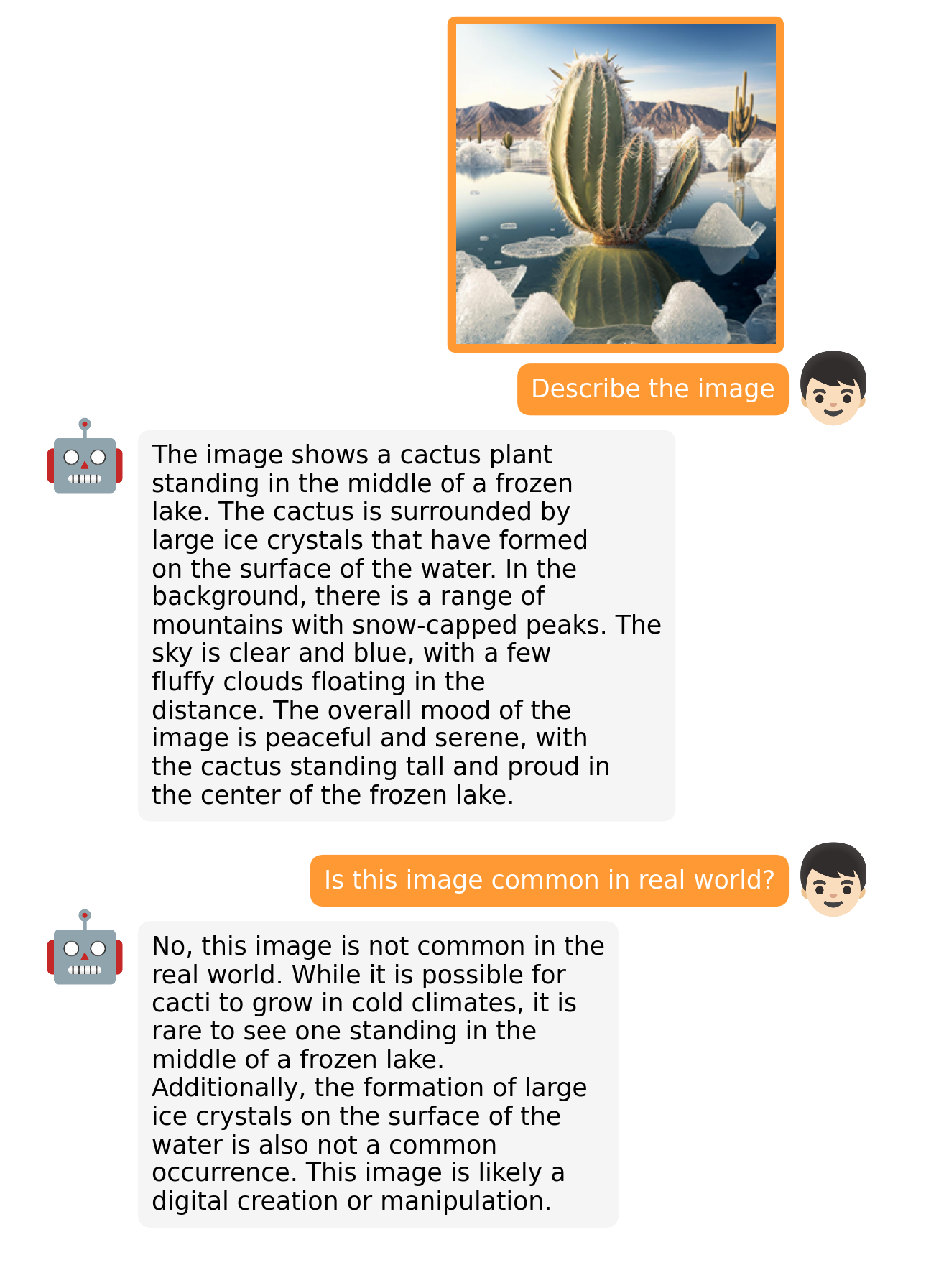

MiniGPT-4 Examples

More examples can be found on the project page.

Getting Started

Installation

1. Prepare the code and the environment

Clone our repository, create a Python environment, and activate it with the following commands:

git clone https://github.com/Vision-CAIR/MiniGPT-4.git

cd MiniGPT-4

conda env create -f environment.yml

conda activate minigpt4

2. Prepare the pretrained LLM weights

MiniGPT-v2 is based on Llama 2 Chat 7B. For MiniGPT-4, we provide both Vicuna V0 and Llama 2 versions. Download the corresponding LLM weights from the following Hugging Face repositories by cloning them with git-lfs.

| Llama 2 Chat 7B | Vicuna V0 13B | Vicuna V0 7B |
|---|---|---|
| Download | Download | Download |

Then, set the path to the Vicuna weights in the model config file here at Line 18 and/or the path to the Llama 2 weights in the model config file here at Line 15.
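Editing the path by hand is usually simplest, but the change can also be scripted. The following is a minimal, dependency-free sketch that rewrites one `key: value` line in YAML text. The key name `llama_model`, the sample config contents, and the target path are assumptions for illustration only; check the actual model config file for the real key before relying on this.

```python
import re


def set_yaml_value(yaml_text: str, key: str, new_value: str) -> str:
    """Replace the value of the first `key: ...` line, keeping its indentation."""
    pattern = re.compile(rf"^([ \t]*{re.escape(key)}[ \t]*:[ \t]*).*$", re.MULTILINE)
    # A function replacement avoids backslash interpretation in Windows-style paths.
    new_text, count = pattern.subn(
        lambda m: m.group(1) + f'"{new_value}"', yaml_text, count=1
    )
    if count == 0:
        raise KeyError(f"key {key!r} not found")
    return new_text


# Hypothetical config contents; the real file may differ.
sample = (
    "model:\n"
    "  arch: minigpt4\n"
    '  llama_model: "path-not-set"\n'
)

updated = set_yaml_value(sample, "llama_model", "/path/to/vicuna-weights")
print(updated)
```

A line-based rewrite like this leaves comments and key order in the file untouched, which a full parse-and-dump round trip may not.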

3. Prepare the pretrained model checkpoints

Download the pretrained checkpoints

| MiniGPT-v2 (LLaMA-2 Chat 7B) |
|---|
| Download |

For MiniGPT-v2, set the path to the pretrained checkpoint in the evaluation config file in eval_configs/minigptv2_eval.yaml at Line 8.

| MiniGPT-4 (Vicuna 13B) | MiniGPT-4 (Vicuna 7B) | MiniGPT-4 (LLaMA-2 Chat 7B) |
|---|---|---|
| Download | Download | Download |

For MiniGPT-4, set the path to the pretrained checkpoint in the evaluation config file: eval_configs/minigpt4_eval.yaml at Line 8 for the Vicuna version, or eval_configs/minigpt4_llama2_eval.yaml for the Llama 2 version.

Launching Demo Locally

For MiniGPT-v2, run

python demo_v2.py --cfg-path eval_configs/minigptv2_eval.yaml --gpu-id 0

For MiniGPT-4 (Vicuna version), run

python demo.py --cfg-path eval_configs/minigpt4_eval.yaml --gpu-id 0

For MiniGPT-4 (Llama2 version), run

python demo.py --cfg-path eval_configs/minigpt4_llama2_eval.yaml --gpu-id 0
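The three launch commands differ only in the demo script and the evaluation config. As a rough sketch, a small lookup table can build the right command for a given variant. The variant names (`minigptv2`, `minigpt4-vicuna`, `minigpt4-llama2`) and the helper `demo_command` are made up for this example; the scripts, config files, and flags are the ones named in this README.

```python
import shlex

# Hypothetical variant names -> (demo script, evaluation config from this README).
DEMOS = {
    "minigptv2": ("demo_v2.py", "eval_configs/minigptv2_eval.yaml"),
    "minigpt4-vicuna": ("demo.py", "eval_configs/minigpt4_eval.yaml"),
    "minigpt4-llama2": ("demo.py", "eval_configs/minigpt4_llama2_eval.yaml"),
}


def demo_command(variant: str, gpu_id: int = 0) -> list:
    """Return the argument list that launches the demo for `variant`."""
    script, cfg = DEMOS[variant]  # raises KeyError for an unknown variant
    return ["python", script, "--cfg-path", cfg, "--gpu-id", str(gpu_id)]


cmd = demo_command("minigpt4-llama2", gpu_id=1)
print(shlex.join(cmd))
```

The returned list can be passed directly to `subprocess.run(cmd, check=True)` from the repository root.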

To save GPU memory, the LLM is loaded in 8-bit by default, with a beam search width of 1. This configuration requires about 23 GB of GPU memory for a 13B LLM and 11.5 GB for a 7B LLM. On more powerful GPUs, you can run the model in 16-bit by setting low_resource to False in the relevant config file (MiniGPT-v2: minigptv2_eval.yaml; MiniGPT-4 (Llama2): minigpt4_llama2_eval.yaml; MiniGPT-4 (Vicuna): minigpt4_eval.yaml).
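To get a feel for why 16-bit loading needs a larger GPU, the back-of-the-envelope sketch below estimates the memory taken by the LLM weights alone at each precision. It is only a lower bound: the totals quoted above are larger, presumably because they also include the vision encoder, activations, and runtime overhead, none of which is modeled here.

```python
def weight_memory_gb(num_params_billion: float, bits_per_param: int) -> float:
    """Memory for the LLM weights alone, in GB (10^9 bytes)."""
    total_bytes = num_params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


for size in (7, 13):
    for bits in (8, 16):
        gb = weight_memory_gb(size, bits)
        print(f"{size}B LLM at {bits}-bit: ~{gb:.1f} GB for weights")
```

Moving from 8-bit to 16-bit doubles the weight footprint, so the 13B model's weights alone go from roughly 13 GB to roughly 26 GB.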

Thanks to @WangRongsheng, you can also run MiniGPT-4 on Colab.

Training

For training details of MiniGPT-4, check here. The training of MiniGPT-4 contains two alignment stages.

1. First pretraining stage

In the first pretraining stage, the model is trained on image-text pairs from the Laion and CC datasets to align the vision and language models. To download and prepare the datasets, please check our first stage dataset preparation instruction. After the first stage, the visual features are mapped into a form the language model can understand. To launch the first stage training, run the following command. In our experiments, we use 4 A100 GPUs. You can change the save path in the config file train_configs/minigpt4_stage1_pretrain.yaml.

torchrun --nproc-per-node NUM_GPU train.py --cfg-path train_configs/minigpt4_stage1_pretrain.yaml

A MiniGPT-4 checkpoint with only stage one training can be downloaded here (13B) or here (7B). Compared to the model after stage two, this checkpoint frequently generates incomplete and repeated sentences.

2. Second finetuning stage

In the second stage, we use a small, high-quality image-text pair dataset that we created ourselves and convert it to a conversation format to further align MiniGPT-4. To download and prepare our second stage dataset, please check our second stage dataset preparation instruction. To launch the second stage alignment, first specify the path to the checkpoint file trained in stage 1 in train_configs/minigpt4_stage2_finetune.yaml. You can also specify the output path there. Then, run the following command. In our experiments, we use 1 A100 GPU.

torchrun --nproc-per-node NUM_GPU train.py --cfg-path train_configs/minigpt4_stage2_finetune.yaml

After the second stage alignment, MiniGPT-4 is able to talk about the image coherently and in a user-friendly way.
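The two training stages use the same `torchrun` invocation and differ only in the config file and the number of GPUs. The sketch below builds both commands in order, using the GPU counts reported above (4 for stage 1, 1 for stage 2) as defaults. The helper `train_command` and the `STAGES` table are illustrative names, not part of the repository.

```python
import shlex

# Stage number -> (config file from this README, GPU count used in the authors' experiments).
STAGES = {
    1: ("train_configs/minigpt4_stage1_pretrain.yaml", 4),
    2: ("train_configs/minigpt4_stage2_finetune.yaml", 1),
}


def train_command(stage: int, num_gpus=None) -> list:
    """Return the torchrun argument list for the given training stage."""
    cfg, default_gpus = STAGES[stage]
    gpus = default_gpus if num_gpus is None else num_gpus
    if gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return ["torchrun", "--nproc-per-node", str(gpus), "train.py", "--cfg-path", cfg]


# Stage 2 must start only after stage 1 has finished and its checkpoint path
# has been written into the stage 2 config.
for stage in (1, 2):
    print(shlex.join(train_command(stage)))
```

Passing `num_gpus` explicitly overrides the default when the available hardware differs from the setup described here.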

Acknowledgement

- BLIP2 The model architecture of MiniGPT-4 follows BLIP-2. Don't forget to check out this great open-source work if you are not already familiar with it!

- Lavis This repository is built upon Lavis!

- Vicuna The fantastic language ability of Vicuna with only 13B parameters is just amazing. And it is open-source!

- LLaMA The strong open-sourced LLaMA 2 language model.

If you're using MiniGPT-4 in your research or applications, please cite using this BibTeX:

@article{Chen2023minigpt,

title={MiniGPT-v2: Large Language Model as a Unified Interface for Vision-Language Multi-task Learning},

author={Chen, Jun and Zhu, Deyao and Shen, Xiaoqian and Li, Xiang and Liu, Zechu and Zhang, Pengchuan and Krishnamoorthi, Raghuraman and Chandra, Vikas and Xiong, Yunyang and Elhoseiny, Mohamed},

journal={github},

year={2023}

}

@article{zhu2023minigpt,

title={MiniGPT-4: Enhancing Vision-Language Understanding with Advanced Large Language Models},

author={Zhu, Deyao and Chen, Jun and Shen, Xiaoqian and Li, Xiang and Elhoseiny, Mohamed},

journal={arXiv preprint arXiv:2304.10592},

year={2023}

}

License

This repository is under the BSD 3-Clause License. Much of the code is based on Lavis, which is also under the BSD 3-Clause License (see here).